Industry Insights

Machine Vision Drives New Inroads into Electric Vehicle Manufacture

From a consumer standpoint, the emergence of electric vehicles (EVs) two decades ago changed the automotive landscape forever. From the perspective of machine vision, the impact of EVs has been more nuanced.

EVs may have introduced different parts to the automotive assembly line, including larger-scale batteries, new power electronic components, and electric motors. But while the parts have changed, the role of vision has not. Imaging systems still perform the same basic functions on the assembly line, whether that be identifying, gauging, or inspecting parts.

“Instead of looking at a big cylinder block made of aluminum, we’re looking at battery terminals and battery arrays or electric motors and coils,” says Steve Wardell at ATS Automation. “The parts have changed, but the inspections are answering similar questions: Is there a hole there and is it the right size? Are there scratches on that surface? Is there a barcode imprinted on that piece?”

EVs and hybrid electric vehicles remain extensions of the automotive market for now, representing about 8% of global sales today, according to a recent report by Boston Consulting Group. But they are projected to account for a third of automotive sales by 2025 and to surpass internal combustion engine (ICE) vehicles by 2030.

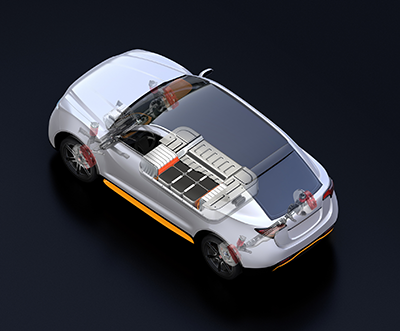

From the standpoint of vision technology, there are important differences between vehicles powered by batteries and those driven by ICEs. A conventional power train, for example, has about 1,400 components. An EV’s drivetrain, by comparison, comprises about 200 parts. Fewer parts might suggest fewer vision applications per vehicle. But the apparent simplicity of a finished electric drivetrain conceals the multiple steps required to manufacture it.

“To me, the batteries are the big differentiator between conventional and EV automobiles,” Wardell says. “EV batteries comprise multiple cells attached together into different configurations, and the design and manufacture of each of those cells is a separate industry with its own assembly processes and quality assurance methods.”

Additionally, the cells and modules that constitute EV batteries are fully encapsulated during final assembly. That can make it difficult to replace or repair a bad part, which puts pressure on vision systems to reliably capture defects throughout the four phases of EV battery production.

Inspecting Batteries

The lithium-ion batteries that power EVs are not only complex systems in their own right; they’re also divided into three categories — cylindrical, pouch, and prismatic — that share few unifying standards. But regardless of battery format, the electrode forms the basic building block and determines the cell’s energy density, cycle life, and safety.

Battery manufacture begins with the application of electrode materials onto copper and aluminum foils, and the dimensions of these coatings must be precise to enable the efficient flow of electricity. Area scan cameras are well suited to gauge the respective widths of the electrode, insulator, and metal foil, and can help manufacturers validate the accuracy and repeatability of their processes and correct issues early, before the electrode is rolled, wound, or stacked into a lithium-ion cell. Surface inspection using line scan cameras further detects decarburization, bubbling, and other defects at this stage of the process.
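As a rough illustration of the width gauging described above, the sketch below measures a coated band in a single row of pixel intensities and checks it against a tolerance. Everything in it is an assumption made for illustration: the function names, the threshold, the pixel scale, and the premise that the coating images darker than the bare foil. A production system would calibrate the camera and use subpixel edge detection rather than a simple threshold.

```python
def measure_coating_width_mm(profile, threshold, mm_per_pixel):
    """Return the width of the dark coated band in one row of pixels, in mm."""
    # Assumption: the coating images darker than the bare metal foil.
    coated = [i for i, value in enumerate(profile) if value < threshold]
    if not coated:
        return 0.0
    # Width spans from the first to the last coated pixel, inclusive.
    return (coated[-1] - coated[0] + 1) * mm_per_pixel


def within_tolerance(width_mm, nominal_mm, tolerance_mm):
    """True if the measured width is inside nominal +/- tolerance."""
    return abs(width_mm - nominal_mm) <= tolerance_mm


# Hypothetical row: bright foil (200), dark coating (40), bright foil (200).
scan_line = [200] * 5 + [40] * 10 + [200] * 5
width = measure_coating_width_mm(scan_line, threshold=100, mm_per_pixel=0.05)
coating_ok = within_tolerance(width, nominal_mm=0.5, tolerance_mm=0.02)
```

Repeating the measurement on every row as the foil moves gives a running record of coating width, which is the kind of data a manufacturer could use to spot process drift before the electrode is rolled, wound, or stacked.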

Individual cells in an EV battery contain a sealed compartment with electrodes, a separator, and electrolytes. During assembly, cells are wound, rolled, or stacked, depending on battery design. Before electrode sheets are wound or stacked to form a cell, notches are made in the sheet’s copper and aluminum edges to create metal tabs. Misaligning these tabs by even a fraction of a millimeter can cause poor cell connections, so high-resolution cameras are needed to gauge the distance between them.

Rolled cells are enclosed in metal cylinders, which are then wrapped in a vinyl coating. Surface inspection is critical both before and after the wrap is applied. Both inspections, however, present challenges for conventional rules-based vision systems: Not only is the cylindrical surface of the cell difficult to image accurately with standard imagers, but standard vision systems are not very efficient at handling the visual variance in contaminants and defects. As a result, cylinder inspection became one of the early applications for deep learning technology, which can be trained to locate surface defects and anomalies on the sides, tops, and bottoms of cylinder batteries while ignoring irrelevant variations.

Applications like this have fueled the automotive industry’s growing interest in deep learning tools and have prompted vision suppliers like Cognex to make them more intuitive to use. With this end in mind, the company recently launched its D900 series, which combines its ViDi solution and In-Sight vision tools inside an intuitively configurable smart camera.

“The D900 series is tailor-made for almost any surface inspection in the EV market,” says Lou Hedtke, global account manager, automotive, at Cognex. “What sets it apart from AI solutions like Google’s Quantiphi or IBM’s Watson is its ease of use. The D900 saves images directly to the smart camera, which allows users to quickly train the neural network to identify defects that conventional vision systems would struggle to detect. The practical benefit is time savings. In most cases, Cognex ViDi only needs hundreds or occasionally thousands of images to work effectively.”

Defining a Good Weld

Weld inspection is one of the applications common to both ICE and EV manufacturing processes. But EVs present some unique demands and criteria for what constitutes a good weld.

“I would say the tolerances of bead inspection have gone up tenfold for some EV applications,” says Hedtke. “If you’re looking at a bead on an automotive frame, the criteria might be whether the weld seam has the right volume. It’s looking for absence/presence. But the challenges are much harder for some EV applications.”

One example is the welding of hairpin stators. Stators are found in the alternators of conventional vehicles as well. But hairpin stators form the core of an EV’s motor. That makes them more critical to vehicle performance, and it is a much more expensive proposition to repair or replace a defective motor than to discard a defective alternator.

A combination of imagers is applied before, during, and after the laser welding process that joins the two copper rods into a hairpin configuration. Cognex developed one system that uses its 3D-A5000 area scan camera to help focus a laser on the point where the end of each copper rod meets the other. The system also incorporates 2D GigE and line scan cameras from Cognex to inspect post-weld quality during the assembly process.

The ideal would be to inspect the welding process while it’s underway, says Hedtke. But it is challenging enough for vision systems just to verify hairpin stator welds. “What is a good weld?” he asks. “There’s no clearly defined benchmark, and you really have the one opportunity to verify that process. Once the system is encapsulated, you don’t tear it apart unless it’s for safety purposes.”

The difficulty of benchmarking a good weld has created a bit of a tennis match between EV manufacturers and their vision suppliers. It’s typically the OEM’s role to define the acceptable level of porosity in a weld, for example, so that an integrator or supplier can identify a solution. But EV manufacturers are instead seeking guidance on a suitable benchmark by asking what level of porosity vision systems can accurately identify.

The threshold may ultimately be shaped by the automaker’s tolerance for cost. “When [EV manufacturers] ask, ‘What’s the smallest tolerance of porosity you can identify?’ the answer is that we can qualify porosity of a weld that’s on the moon. But you might have to couple a high-resolution camera to the Hubble Telescope.”
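The cost trade-off in that answer can be made concrete with some back-of-the-envelope arithmetic. The sketch below estimates how many pixels a sensor needs along one axis to resolve a defect of a given size. The function name, the 20 mm field of view, and the rule of thumb of three pixels across the smallest defect are assumptions chosen for illustration, not figures from Cognex or ATS.

```python
def pixels_needed(field_of_view_um, smallest_defect_um, pixels_per_defect=3):
    """Pixels required along one axis to span the smallest defect
    with `pixels_per_defect` pixels. Inputs are whole micrometers."""
    # Ceiling division on integers avoids floating-point rounding surprises.
    return -(-field_of_view_um * pixels_per_defect // smallest_defect_um)


# Hypothetical 20 mm field of view over a weld, three target pore sizes.
coarse = pixels_needed(20_000, 500)  # 0.5 mm pores
medium = pixels_needed(20_000, 100)  # 0.1 mm pores
fine = pixels_needed(20_000, 20)     # 0.02 mm pores
```

Under these assumptions, tightening the target from 0.5 mm to 0.02 mm raises the requirement from 120 to 3,000 pixels along each axis, and sensor cost, optics, lighting, and processing load tend to rise with it.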

Here too, deep learning may help by leveraging the knowledge of human operators to train vision systems on what a good weld looks like.

“If you’re a welder or someone familiar with the process, you can look at a seam and say that is or is not a good solder, or that little pit won’t affect the quality of the weld,” says Wardell. “Unfortunately, there’s no stock dataset that allows you to drop in an algorithm and go. Each application will need time to define, build, categorize, and train a dataset specific to that application.”

Solutions like ATS’s Illuminate visual inspection platform are designed to help this transition from human operators to machine vision. In the context of a welding application, for example, an Illuminate-enabled imaging system would work in tandem with a live expert, who would review acquired images of the seam and initially flag defects for the system. “Over time, the accumulation of categorized images, human input, and application of deep learning algorithms enables the vision system to match or exceed the performance of the human operator, at which point inspections can be fully automated,” says Wardell.
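One way to picture that handoff is as a running comparison between the system's verdicts and the expert's. The sketch below is hypothetical and is not the Illuminate API: the class name, window size, and agreement threshold are all assumptions. It tracks how often the model matches the expert over the most recent images and signals when agreement is high enough to consider automating.

```python
from collections import deque


class HandoffMonitor:
    """Tracks how often the model agrees with the expert on recent images."""

    def __init__(self, window=200, required_agreement=0.98):
        # Only the most recent `window` comparisons are kept.
        self.results = deque(maxlen=window)
        self.required_agreement = required_agreement

    def record(self, model_label, expert_label):
        """Store whether the model matched the expert on one image."""
        self.results.append(model_label == expert_label)

    def agreement(self):
        """Fraction of recent images where model and expert agreed."""
        return sum(self.results) / len(self.results) if self.results else 0.0

    def ready_to_automate(self):
        """True once the window is full and agreement meets the threshold."""
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.agreement() >= self.required_agreement


# Hypothetical review session with a small window for readability.
monitor = HandoffMonitor(window=5, required_agreement=0.8)
reviews = [("good", "good"), ("bad", "good"), ("bad", "bad"),
           ("good", "good"), ("good", "good")]
for model_label, expert_label in reviews:
    monitor.record(model_label, expert_label)
```

In practice the threshold would be set by the manufacturer's quality requirements, and disagreements would be fed back into the training set rather than simply counted.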

The Road Ahead

The growth of the EV market represents unique opportunities for vision suppliers and integrators to grow their businesses, which helps explain why companies like Cognex and ATS have begun to proactively target manufacturers in the space. And while vision technology often performs the same basic functions on assembly lines for electric and ICE vehicles, EVs present a handful of uniquely challenging vision applications — especially on upstream battery assembly lines. Lastly, the enclosed nature of cells, batteries, and even the EV drivetrain puts additional pressure on vision and other quality assurance systems to detect flaws early in the process to minimize costly rework and waste. All of these factors are likely to drive growing interest in vision technology among EV manufacturers and to offer new opportunities for suppliers and integrators prepared to provide solutions.

AIA

AIA - Advancing Vision + Imaging has transformed into the Association for Advancing Automation, the leading global automation trade association of the vision + imaging, robotics, motion control, and industrial AI industries.
