Industry Insights

Cybersecurity Practices for the Digital Factory

Learn strategies for embracing digitalization while protecting the OT network

2020 was a year of turbulence worldwide, and industrial automation, particularly manufacturing, was no exception. The social distancing and “safer at home” requirements imposed by COVID-19 brought home the value of remote access and digitalization: the use of digital data to improve productivity, product quality, and profitability. Today’s factory is more connected than ever at the machine level, the plant level, the organizational level, and up to the cloud. Data is on the move, and operations depend on the ability of the multitude of devices in the Industrial Internet of Things (IIoT) to communicate.

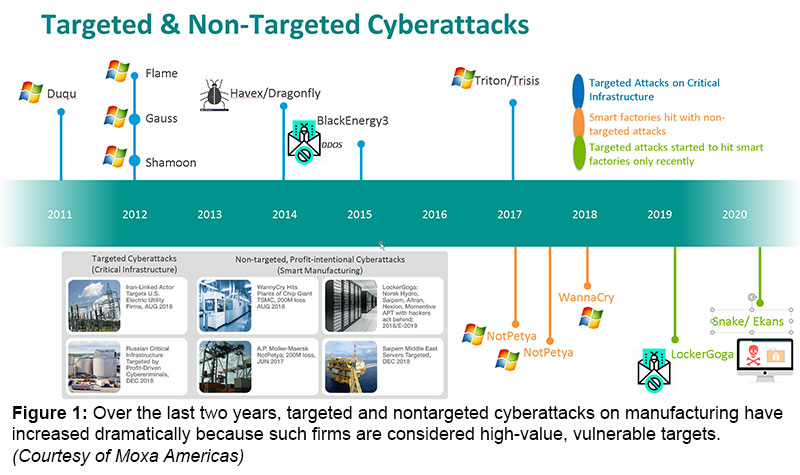

Unfortunately, 2020 brought another digital trend to automation: a rapid increase in the number of cyberattacks on manufacturing targets (see Figure 1). According to Beazley Group, ransomware attacks on manufacturing jumped 156% quarter over quarter from Q4 2019 to Q1 2020, while data from Trend Micro shows Q3 2020 attacks on manufacturing dwarfed all other sectors, including government and healthcare. “Threat actors target manufacturing organizations because of the often time-sensitive nature of production,” says a recent report from Kivu Consulting. “Manufacturing entities also commonly fail to invest in IT services, which leaves them vulnerable to attacks.”

By gaining unauthorized access to networks and devices, hackers can steal IP, disrupt operations, compromise safety, damage equipment, and impact product quality. Given today’s connected enterprise, attacking the network at one plant may provide access to all of them. And the risk doesn’t just come through a connection to the Internet. In the recent SolarWinds hack, attackers embedded malicious code in a widely used application; the code penetrated customer systems when it was loaded during the installation of legitimate patches. The attack highlights the risks of even trusted, and seemingly trustworthy, software. The question is no longer whether to expose the OT network and OT assets. The question is whether that exposure will be intentional and secure or unintentional and unprotected.

The Challenges of the OT Network

For the first few decades of the Internet, the operational technology (OT) network was separated from the IT network and the Internet. The goal of this so-called air gap was to protect the industrial control system (ICS) and related assets from incursions originating in the business network. As a result of the evolving enterprise network architecture, however, this air gap is no longer effective. The threat comes not only from ICS assets accessed remotely over industrial Ethernet and the Internet, but also from other OT networks that may have been compromised by attackers.

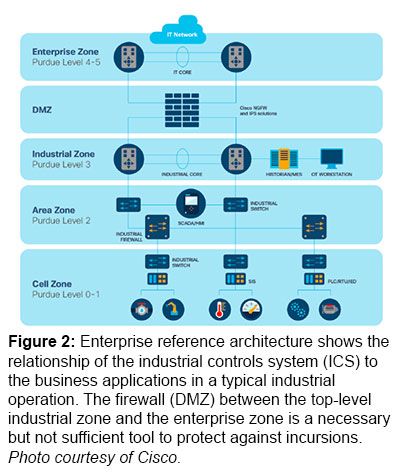

The Purdue Enterprise Reference Architecture (PERA) is a multilayer hierarchy that establishes the levels of an industrial operation involving an ICS. They are:

- Level 0: Physical layer (e.g., motors, actuators, sensors)

- Level 1: Intelligent devices (e.g., PLCs, motion controllers, smart drives)

- Level 2: Supervisory control layer (e.g., HMIs, SCADA software)

- Level 3: Manufacturing operations systems (e.g., MES, maintenance, data historians)

- Level 4: Enterprise network and applications (e.g., ERP, intranet, office applications)

ISA-95/IEC 62264 maps those layers to a zone architecture that incorporates the classic air gap in the form of a demilitarized zone (DMZ; see Figure 2).

The businesses and industries that use paper to pass orders, workflows, and recipes from the top floor to the shop floor are dwindling fast. Enterprises are connected, and the air gap of the DMZ is a necessary but not sufficient tool to protect against incursions. Even having firewalls between each level won’t protect against horizontal threats. “These days, nobody can do their work without accessing the internet,” says Ruben Lobo, product line manager for industrial switching and IoT security at Cisco (Raleigh-Durham, North Carolina). “The DMZ is very porous, so the threats from the inside may be even worse than the threats from the outside.”

For an example of an inside threat, look no further than the system with the ultimate air gap: the International Space Station. Its systems have been hacked multiple times over the years, both as a result of astronauts bringing infected laptops on board and, more recently, via algorithms harvested from the theft of an unencrypted notebook computer.

“Most of the malware and ransomware [that attacks the OT network] is just the same type that’s found in the traditional IT world,” says Lobo. “Maybe the PC that the control engineer is using to program the PLCs gets attacked first from something downloaded from the Internet, and that infects your operator workstation.” After all, a hacker doesn’t need to understand the code of a PLC if they can encrypt the graphics files on the PC for the HMI that runs the machine. The end result is the same: production stops until the files are released.

That is not to say that individual smart devices aren’t also vulnerable. The ever-expanding IIoT and the emphasis on digitalization have markedly increased the attack surface of the modern factory. PLCs, motion controllers, drives, encoders, and HMIs all have software, firmware, and operating systems. They may be linked to data historians or other storage devices with installed databases. There was a time when edge devices were dedicated servers that could introduce an extra layer of protection. Today, nearly any intelligent device can act as an edge device, bringing with it the risks of incursion by hackers. Meanwhile, third-party vendors like OEMs, integrators, and reliability technicians may have set up backdoors to access the machine for troubleshooting and maintenance.

Further complicating matters is the fact that most industrial facilities grow up piecemeal, with standalone equipment running on a fieldbus that was later connected to a broader plant-wide system, and eventually to the enterprise and the Internet. Greenfield factories are very much the exception rather than the rule, and while the electronics on most lines are upgraded every five to 10 years, many older facilities have to contend with outdated equipment in legacy systems. Computers running Windows XP or even Windows NT are not unheard of, even though neither OS has been supported for years. “You've got a lot of equipment out there on the floor that was never meant to be connected to the internet,” says Richard Wood, Product Marketing Division Manager for Moxa Americas (Brea, California). “This equipment may be outdated, unpatched, have known vulnerabilities, or simply no capacity to protect itself.”

The connected factory presents a multitude of cybersecurity challenges. Fortunately, there are well-established techniques organizations can use to progress from vulnerable architectures to more secure postures. The process includes mapping connected devices across the enterprise, segmenting the network to limit incursions, monitoring traffic to identify threats inside these network segments, and codifying response, both organizationally and procedurally.

Governance

A successful cybersecurity initiative must be built on a foundation of governance. Only with an effective, detailed process in place can the organization implement a program that will actually protect itself and its assets.

An organization needs to determine its risk tolerance and strategic imperatives in order to align its cybersecurity efforts with the enterprise as a whole. The governance model needs to establish fixed policies and rules for enforcing them, not just in terms of connected devices but also people and procedures.

Devices

The core of any cybersecurity initiative is device-level policy. It should categorize network devices based on associated risk and specify how to configure them, including patches and upgrades.

Policies should cover the lifetime of the devices, beginning with secure procurement. “You want to understand the risks of the devices that you plan to purchase,” says Wendy Frank, Deloitte’s Cyber 5G leader. This includes assessing the vendor’s cybersecurity program implementation. “Does their program follow comprehensive standards such as NIST and ISA/IEC 62443? Are they performing security and privacy risk assessments of their devices and addressing vulnerabilities? Are they conducting proper testing? What are their contractual terms? If they're promising certain levels of security and privacy, try to get that put into your contract with them.”

Any general compute devices should be industrially hardened, with any unnecessary applications stripped off and any unused ports and services disabled. Each device needs its own unique IP address. Factory usernames and passwords should be replaced by fresh usernames and strong passwords. This is particularly important for automation components like PLCs and controllers, a surprising number of which are shipped without security provisions activated.
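The hardening checks described above lend themselves to a simple automated audit. The following is a minimal sketch, not a vendor tool: the `DeviceConfig` record, the `FACTORY_DEFAULTS` list, and the device names and addresses are all hypothetical, and a real audit would pull this information from the devices or from a configuration database.

```python
from dataclasses import dataclass, field

# Hypothetical factory-default credential pairs; a real list would come
# from vendor documentation for the devices actually installed.
FACTORY_DEFAULTS = {("admin", "admin"), ("admin", ""), ("root", "root")}


@dataclass
class DeviceConfig:
    name: str
    ip: str
    username: str
    password: str
    enabled_services: set = field(default_factory=set)
    required_services: set = field(default_factory=set)


def audit(devices):
    """Return a dict mapping each device name to a list of hardening findings."""
    findings = {d.name: [] for d in devices}
    seen_ips = {}  # IP address -> name of the first device seen using it
    for d in devices:
        if (d.username, d.password) in FACTORY_DEFAULTS:
            findings[d.name].append("factory default credentials")
        extra = d.enabled_services - d.required_services
        if extra:
            findings[d.name].append(
                "unused services enabled: " + ", ".join(sorted(extra))
            )
        if d.ip in seen_ips:
            findings[d.name].append(
                f"duplicate IP address (also used by {seen_ips[d.ip]})"
            )
        else:
            seen_ips[d.ip] = d.name
    return findings


# Illustrative data only.
devices = [
    DeviceConfig("plc-1", "10.0.1.10", "admin", "admin",
                 {"modbus", "telnet"}, {"modbus"}),
    DeviceConfig("hmi-1", "10.0.1.10", "operator7", "S7r0ng!pass",
                 {"https"}, {"https"}),
]
report = audit(devices)
```

In this example, `plc-1` is flagged for default credentials and an unneeded Telnet service, and `hmi-1` is flagged for sharing an IP address with `plc-1`.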

Part of effective governance is building procedures for keeping software patches up to date. Automatically downloading and installing patches can be problematic, however. There is the previously noted example of malware embedded in a patch from a trusted source. Even if the patch is safe, it can still cause other devices in the network to crash. A better approach is to use tools to receive automatic notifications of new patches but test and validate them prior to installing them in the production system. “We recommend that people subscribe to ICS-CERT (see sidebar) for vulnerability alerts,” says Wood. “Most reputable manufacturers will have an RSS feed or email notification for updates on the devices they sell, so contact your vendor to make sure you’re automatically getting notified of upgrades and patches in some way. If that’s not possible, set up a periodic schedule for checking those devices.”

Finally, good governance includes secure disposal at end of life, particularly in the case of general compute products. If devices aren’t put through an appropriate process, the result could be the unintentional release of sensitive data.

Authentication and Users

Firmware updates and security patches may cause concerns, but often, the biggest risk is not software or firmware but peopleware. Examples abound, from passwords on Post-It notes stuck on the HMI screen to the operator who plugs their infected smartphone into an open USB port on an industrial PC, not realizing that the charge cable is not just a power connection but a data connection. The scenario of an engineer picking up a memory stick in the parking lot and infecting their laptop (and the network) with malware has become a cliché, but that doesn’t mean it isn’t a risk.

Cybersecurity policy should follow best practices. Eliminate shared credentials. Each user should have a unique username and password. Apply role-based permissioning, based on the principle of least privilege: give users access only to the data and functions they need to accomplish their tasks. Change passwords regularly and, whenever possible, use multifactor authentication. These techniques should be straightforward to apply if IT and OT are working closely on security. The IT department will already have an authentication server set up for the enterprise zone; it’s easy enough to set up something similar for the OT network. The approach also simplifies forensics. If there’s a problem, it’s possible to identify the individuals who accessed the network at a certain time or changed the configuration on a specific device. “It gives you the forensics capabilities you need to really track down what happened if there is a breach,” says Wood.
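Role-based permissioning and the audit trail it enables can be illustrated in a few lines. This is a hedged sketch rather than a real authentication server: the role names, permission names, and users are hypothetical, and a production system would delegate these checks to a directory or authentication service.

```python
from datetime import datetime, timezone

# Hypothetical roles: each grants only the actions that role needs.
ROLE_PERMISSIONS = {
    "operator": {"read_hmi"},
    "maintenance": {"read_hmi", "read_plc"},
    "control_engineer": {"read_hmi", "read_plc", "write_plc"},
}

# One account per person (no shared credentials), each mapped to one role.
USER_ROLES = {"alice": "control_engineer", "bob": "operator"}

# Every decision is recorded so it can be reviewed after an incident.
audit_log = []


def is_allowed(user, action):
    """Apply least privilege: deny unless the user's role grants the action."""
    role = USER_ROLES.get(user)  # None for unknown users
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "allowed": allowed,
    })
    return allowed
```

Because every account maps to one person, each entry in `audit_log` identifies who attempted what and when, which is the forensic benefit described above. Unknown users and unlisted actions are denied by default.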

Authentication needs to be accompanied by employee training. Many highly publicized data breaches have come about as a result of simple user mistakes that could easily have been avoided with greater awareness. The PCs and mobile devices of staff in engineering and maintenance, for example, may have a direct connection to the OT network. A phishing attack on their email will do just as effective a job of infecting the area zone as it will the enterprise zone. Invest time and effort into education. Use a different device to interface with OT devices and networks than for business applications like email.

IT and OT

Cybersecurity has traditionally been the province of the IT shop, focusing on business systems in the enterprise zone. The traditional challenge in managing OT cybersecurity through IT is that the devices on the manufacturing floor are fundamentally different in communications flow and function from conventional networking equipment. The misunderstandings that arise from these differences can prove catastrophic. “For effective operational security, you really need to leverage OT standards such as ISA/IEC 62443, and understand the manufacturing floor, the ops and the specific devices, which is an area where the IT staff who are typically responsible for cybersecurity usually have not been trained,” says Frank.

“The projects that fail typically do so because somebody’s trying to implement some kind of security measure without understanding the process and actually ends up blocking legitimate flows,” says Lobo. It’s not that the IT department needs to understand how to program a PLC, or even what a PLC is. They do need to understand the process in terms of which devices need to communicate and over what time frames. “They need to have a context. They need to know that this PLC is supposed to be talking to this I/O, and the PLCs on these two lines are supposed to be talking to each other, so network segmentation should not block it.”

Recognizing the importance of security, companies are increasingly establishing the position of chief information security officer (CISO). The CISO spearheads the governance process, codifies cooperation and priorities, and monitors the platform and traffic for threats. In the case of OT network protection, they oversee both IT and the line of business (OT). Having this extra layer, when possible, streamlines the process and optimizes results.

Getting Started

Once an effective cybersecurity policy has been established, it actually needs to be implemented. There are four key steps involved.

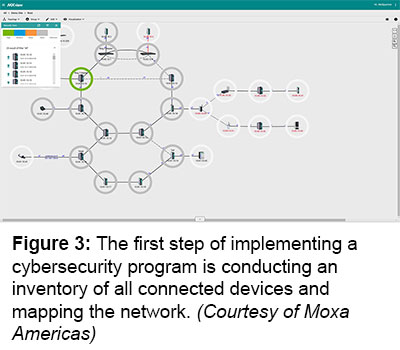

Step #1: Network Visibility

The essential starting point for protecting the network is to discover all connected assets, determining where they are connected and how they communicate with each other (see Figure 3). Tools and approaches are now available that monitor and inventory all devices on the network, both IT and OT, and provide a detailed network architecture. “I can't stress enough the importance of asset visibility and management,” says Frank. “Organizations need to be able to say, ‘Here's the list of devices in our network. Here’s the version of software they're running and these are the manufacturers.’ Unfortunately, in most cases, they can’t.” This information is crucial to tracking known vulnerabilities and remediating them later. It is used to coordinate maintenance of the endpoints to determine what devices need to be updated and whether there are issues communicating between devices and control systems.

This critical first step can lead to surprises. “It is pretty common during that process to turn up connections that the security team didn’t know existed,” says Wood. “Maybe someone set up a wireless access point so that they could sit at their desk and directly access the plant network, or an OEM put a device on their machine that was meant to give them access to just their equipment. But because they didn’t isolate that device from the network using a firewall or some sort of network segmentation, that seemingly innocent connection to one asset now becomes a remote connection to the entire network.”

These hidden devices often represent a high level of vulnerability, either because they are not configurable to even rudimentary standards or they are outdated and have known issues. On the upside, once they’ve been identified, they can be prioritized for upgrade.

When the inventory is complete, the next step is to assess each device to determine whether it’s properly configured. Go to the manufacturer’s website and check for firmware updates. Investigate whether the device has any known vulnerabilities. Essentially, perform the due diligence that should have been done at the point of acquisition and installation.
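The assessment step can be sketched as a lookup of each inventoried asset against the latest firmware and any open advisories. Everything named here is an assumption for illustration: the `Asset` record, the manufacturer and model names, the `LATEST_FIRMWARE` and `KNOWN_ADVISORIES` tables, and the advisory ID are hypothetical, and real data would come from vendor notifications and vulnerability alerts. Version strings are assumed to be purely numeric and dot-separated.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    manufacturer: str
    model: str
    firmware: str  # dotted numeric version, e.g. "2.4.1"


def parse_version(version):
    """Turn "2.4.1" into (2, 4, 1) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical reference data keyed by (manufacturer, model).
LATEST_FIRMWARE = {
    ("ExampleCo", "PLC-100"): "2.5.0",
    ("ExampleCo", "Drive-20"): "1.2.0",
}
# Each advisory: (hypothetical advisory ID, firmware version that fixes it).
KNOWN_ADVISORIES = {
    ("ExampleCo", "PLC-100"): [("EXAMPLE-ADV-001", "2.5.0")],
}


def assess(asset):
    """Summarize whether an asset needs a firmware update or has open advisories."""
    key = (asset.manufacturer, asset.model)
    current = parse_version(asset.firmware)
    latest = LATEST_FIRMWARE.get(key)
    open_advisories = [
        advisory_id
        for advisory_id, fixed_in in KNOWN_ADVISORIES.get(key, [])
        if current < parse_version(fixed_in)
    ]
    return {
        "name": asset.name,
        "firmware_outdated": latest is not None and current < parse_version(latest),
        "open_advisories": open_advisories,
        "unknown_model": latest is None,  # no reference data: investigate manually
    }
```

Assets flagged as `unknown_model` are the ones for which no reference data exists; in practice these are often the surprise devices turned up during discovery and deserve manual review.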

Identifying all assets, where they connect to the network, and how they communicate with each other requires a combination of passive and active discovery. Passive discovery involves deep packet inspection of the traffic. The challenge is that most of the I/O traffic passes between I/O points and the PLC, which means that it rarely leaves Level 1. For passive discovery to identify all of the connected devices at the machine level, deep packet inspection needs to take place at the lowest levels of the network model. “If you’re inspecting the traffic too high at the aggregation layer, you’re probably going to see less than 20% of what’s going on,” says Lobo. “Some customers see that 20% and they have a false sense of security, thinking they’ve got visibility. But, really, you need to get to 100%, so you need to go all the way down to the bottom of the plant floor. How do you do it cost effectively is the most challenging thing right now.”

Active discovery uses a tool to actively scan the network. It can be an effective approach but has been somewhat controversial. Legacy equipment sometimes crashes during active discovery, which has led some users to blame the technology. That’s not the case, says Lobo. “There are a lot of misconceptions in the community about active discovery. It’s not the active discovery that is bringing down the control system, it’s because the network is very poorly designed and the act of doing an active discovery leads to a sequence of events that causes certain legacy devices to crash. If active discovery is being done in a poorly designed network environment, there is a certain way in which you have to do it. That aspect is not very well understood by the customer base.” The technique is gaining acceptance now, with an increasing number of vendors offering active discovery tools. It’s an important advance, in Lobo’s view. “To truly get to 100% visibility, you need to do a combination of active and passive discovery.”

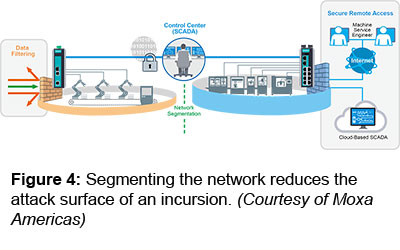

Step #2: Network Segmentation

Once the visibility process has been completed and the devices properly configured and patched, the next step is network segmentation. Network segmentation helps limit the reach of attacks and can also be used to sequester vulnerable assets (see Figure 4). The DMZ is the primary example of segmentation, using firewalls to separate the IT network from the OT network. The DMZ can be effective at blocking outside threats, but it would be a mistake to assume that this makes the network safe. If the goal is truly to prevent downtime and losses because of a cyberattack, then the OT network below the DMZ needs to be segmented to protect against internal threats.

Start with macro segmentation. A factory with 10 assembly lines, for example, might be divided into 10 segments. If something happens to segment 1, it won’t spread to segment 2. Then progress to micro segmentation when appropriate. Segmentation needs to be done with input from both IT and OT to ensure that it does not introduce latency in time-sensitive processes. A firewall shouldn’t be placed between a PLC and I/O that provides feedback for a control loop, for example. If it’s essential to segment that part of the network, access control lists (ACLs, a form of whitelisting) can be used to enforce segmentation at line rate, protecting the assets without impeding communications. Once everything is working smoothly with the macro segmentation, the next step is to create microsegments within an assembly line using ACLs and switches.
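The logic of macro segmentation with an allow list can be sketched as follows. This models the decision only; in a real plant the rules would be enforced by switches and firewalls, not by Python. The segment names, subnets, and the single allowed flow are hypothetical, and port 502 is used because it is the standard Modbus TCP port.

```python
from ipaddress import ip_address, ip_network

# Hypothetical macro segments: one subnet per assembly line.
SEGMENTS = {
    "line1": ip_network("10.10.1.0/24"),
    "line2": ip_network("10.10.2.0/24"),
}

# Allow list of cross-segment flows: (source segment, destination segment, port).
# Anything between segments that is not listed here is denied.
ALLOWED_FLOWS = {
    ("line1", "line2", 502),  # e.g. a PLC on line 1 polling a PLC on line 2
}


def segment_of(address):
    """Return the name of the segment containing the address, or None."""
    ip = ip_address(address)
    for name, network in SEGMENTS.items():
        if ip in network:
            return name
    return None


def permit(src, dst, dst_port):
    """Decide whether a flow is allowed under macro segmentation."""
    src_seg, dst_seg = segment_of(src), segment_of(dst)
    if src_seg is None or dst_seg is None:
        return False  # traffic from or to an unknown network is denied
    if src_seg == dst_seg:
        return True   # macro segmentation does not restrict traffic inside a line
    return (src_seg, dst_seg, dst_port) in ALLOWED_FLOWS
```

Micro segmentation would replace the blanket `src_seg == dst_seg` rule with a finer allow list inside each line. Building `ALLOWED_FLOWS` is where OT input matters: it has to include every legitimate PLC-to-PLC and PLC-to-I/O flow, or the segmentation will block production traffic.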

Segmentation provides a solution for mitigating risk from legacy networks running devices with known vulnerabilities, such as those Windows XP machines. If they are sequestered in a separate network, they can’t put other devices at risk. They can also be fitted with intrusion protection devices that can filter incoming traffic for known vulnerabilities and threats.

Step #3: Network and Device Monitoring

Once the devices have been inventoried, configured, and updated and the network has been segmented, the real work begins. Traffic moving across the network needs to be monitored constantly in each segment to detect anomalies and threats. A good tool for this task is a security information and event management (SIEM) system, which aggregates data from across the network to centralize analysis and reporting while reducing false positives.

“The monitoring piece is super important. This is where a lot of companies get into trouble because they don't first have that asset inventory to be able to monitor,” says Frank. “It’s essential to have a threat intelligence program to gather and analyze the data for both internal threats and external threats so you can provide better response, have effective monitoring of your OT devices, and manage those risks proactively.”

In one implementation, a switch at Level 2 copies the traffic and sends a stream to a sensor that decodes the communications protocol and feeds the live stream to a server appliance at Level 3. The appliance uses analytics to detect anomalies. If an HMI that typically reads from a PLC suddenly writes to it, for example, the system would detect it and send an alert. It would look for sudden changes in traffic volume, changes in protocol, anything unusual. It would also run signature-based detection to catch any known threats.
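The anomaly checks described above can be illustrated with a simple baseline comparison. This is a toy sketch, not how any particular monitoring appliance works: events are assumed to be already-decoded `(source, destination, operation)` tuples, the baseline and the monitored window are assumed to cover the same length of time, and the `volume_factor` threshold is an arbitrary illustrative value.

```python
from collections import Counter


def find_anomalies(baseline_events, window_events, volume_factor=3.0):
    """Compare a monitoring window against a learned baseline.

    Flags (1) any (source, destination, operation) combination never seen
    in the baseline, and (2) any source/destination pair whose message
    count exceeds volume_factor times its baseline count.
    """
    baseline_events = list(baseline_events)
    window_events = list(window_events)

    known = set(baseline_events)
    base_volume = Counter((src, dst) for src, dst, _ in baseline_events)
    window_volume = Counter((src, dst) for src, dst, _ in window_events)

    alerts = []
    for event in sorted(set(window_events) - known):
        alerts.append(("new_behavior", event))
    for pair, count in sorted(window_volume.items()):
        expected = base_volume.get(pair, 0)
        if expected and count > volume_factor * expected:
            alerts.append(("volume_spike", pair))
    return alerts


# Baseline: the HMI only ever reads from the PLC.
baseline = [("hmi-1", "plc-1", "read")] * 10
# Window: the same HMI suddenly issues a write.
window = [("hmi-1", "plc-1", "read")] * 9 + [("hmi-1", "plc-1", "write")]
alerts = find_anomalies(baseline, window)
```

Here `alerts` contains a single `new_behavior` entry for the write from `hmi-1` to `plc-1`. Signature-based detection of known threats is a separate mechanism and is not modeled in this sketch.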

Step #4: Response

Even the best threat detection system is ineffective without the ability to act on that information. This step involves the Security Operations Center (SOC), which reports to the office of the CISO. The SOC is staffed by experts who coordinate responses to anomalies. Some types of threats can be handled with software. Threats that are common in the IT world can be addressed by the experts in the SOC. The OT network presents other types of challenges, however.

If a control engineer writes a new program to the motion controller on a machine, the SIEM might promote it to the SOC team. The question is what happens next. “The IT person sitting up in the SOC may not even know what a PLC is,” says Lobo. “So, you need a feedback loop between the folks on the plant floor and the SOC. They could get in touch with the OT user in that particular plant and say, ‘Hey, should I be concerned about this? Did you push this program down to the PLC or is this something that you didn’t expect?’ Most likely, you just want to promote signature-based threats to the SOC and then allow the user on the plant floor to promote anomalies to the control system for investigations because otherwise there would be too many false positives.”
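One way to read the feedback loop Lobo describes is as a routing rule: signature matches go straight to the SOC, while behavioral anomalies go first to the plant floor, where an OT user either confirms them as expected or escalates them. The sketch below is an interpretation under that assumption; the alert fields (`kind`, `ot_review`) and the queue names are hypothetical.

```python
def route_alert(alert):
    """Return the queue that should handle an alert.

    alert is a dict with:
      "kind":      "signature" or "anomaly"
      "ot_review": for anomalies, None (not yet reviewed), "expected",
                   or "unexpected", as set by the plant-floor user
    """
    kind = alert["kind"]
    if kind == "signature":
        return "soc"  # known threats go directly to the SOC
    if kind == "anomaly":
        review = alert.get("ot_review")
        if review is None:
            return "plant_floor"  # ask the OT user first
        if review == "expected":
            return "closed"       # e.g. the engineer did push that program
        if review == "unexpected":
            return "soc"          # OT user escalates for investigation
        raise ValueError(f"unknown ot_review value: {review!r}")
    raise ValueError(f"unknown alert kind: {kind!r}")
```

Routing anomalies through the plant floor first keeps routine engineering changes, such as a planned program download, from flooding the SOC with false positives.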

Finally, the organization needs mechanisms in place for recovery and remediation. Ensure that all critical system files are backed up, ideally to a public or private cloud. Finding the threat is not sufficient, however. It needs to be removed from the network environment and the system needs to be returned to operations. This isn’t always within the skill set of in-house team members, so make sure that access to outside expertise is set up as part of the organization’s policies and procedures.

Cyberattacks in manufacturing and other industrial sectors are on the rise. Organizations need to establish a comprehensive strategy to protect themselves. Start with proper governance. Once a strategy is in place, follow the steps of discovery, device protection, network segmentation, and network and device monitoring. Be sure to have a response team in place to make decisions when anomalies are discovered and take action. Finally, and perhaps most important, don’t neglect training. The human factor is probably the greatest vulnerability of all. Make sure staff is trained in proper procedures and regularly reminded of their role in helping protect the organization. Most of all, no matter what the size of the organization, address the issue, and do it now. “Developing a security policy and enforcing that policy out on the network can be involved,” says Wood, “but failing to do it is just like not locking your front door when you leave your house.”

Motion Control & Motor Association

The Motion Control and Motor Association (MCMA) – the most trusted resource for motion control information, education, and events – has transformed into the Association for Advancing Automation.
