Industry Insights

Ethics in Autonomous Industrial AI: Tackling Bias and Data Privacy

As artificial intelligence (AI) applications proliferate in industrial automation and robotic applications, we are confronted with a series of critical questions:

Can we trust the decisions that an AI system makes? Are those decisions free of bias and ethically sound? Do we know how or why an autonomous agent has reached a conclusion? And do we know that the data that’s collected--especially personal data--is kept private and secure?

A field of study called AI ethics aims to guide the development of AI systems that can both maximize the value of the technology and help answer these questions.

Research and advisory firm Forrester identifies five principles of AI ethics: fairness and bias; trust and transparency; accountability; social benefit; and privacy and security.

In this article, we look specifically at the principles of reducing bias and ensuring data privacy. To explore them in the context of autonomous industrial AI, including a discussion of best practices and standards for ethical use of AI, we sought guidance from industrial AI experts at Cogniteam, Cybernetix Ventures, and Elementary.

What is bias in industrial AI?

Outside of industry, AI bias usually refers to a situation where an AI system produces results that discriminate against a given demographic group. By contrast, AI bias in an industrial setting manifests itself in other ways. Some common examples include the inability of a grading system to separate high-quality from low-quality items, the inability of a mobile robot to navigate a warehouse, an offensive gesture expressed by a collaborative robot (cobot) toward a person, and the failure of a robot to identify the presence of a nearby individual. The last example is particularly important as it can put human safety at risk.

AI systems typically require training on an exceptionally large dataset that provides examples for the AI model to learn from.

“AI bias is usually caused by inadequate training or incomplete training datasets, such as images that fail to reflect the full diversity of real-world lighting conditions or corner use cases that are unique to a given process,” explains Mark Martin, co-founder and general partner of Cybernetix Ventures, which focuses on early-stage robotics, automation, and AI startups.

Yehuda Elmaliah, CEO at Cogniteam, the maker of a cloud robotics AI platform, provides the following example: If an AI system were trained on a dataset that lacked images of workers wearing eyeglasses, the system could easily fail to recognize bespectacled workers and, consequently, fail to open a door or provide safety instructions.

Types of bias in industrial AI

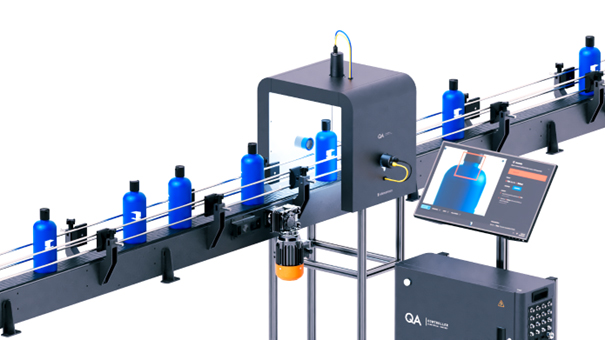

As in other industries, in industrial AI there is intentional and unintentional bias. Intentional bias is a cornerstone of a successful AI solution, explains Mike Bruchanski, vice president of product at Elementary, maker of an AI and quality assurance platform. “If you are building an autonomous vehicle, it makes sense to create an AI model that assigns greater weight to features that are found in roadway safety signage, such as the color red used on stop signs,” he says. “Or in a manufacturing facility that produces bottles of shampoo, biasing an AI system toward finding bottles that lack caps could save a lot of spills.”

Unintentional bias, by contrast, can result in poor decision-making by AI systems. Four types of unintentional bias in AI are data-driven bias, label bias, cultural bias, and confirmation bias.

Data-driven bias: Arises from a lack of diversity in an AI training dataset. For example, training on a spoken dataset that fails to include the full range of English accents could result in a robot that responds differently for an operator who is a native English speaker versus someone for whom English is a second language. Similarly, if a factory quality-assurance application is trained on a dataset that is too small, it can fail to identify types of defects that were not included in the dataset. “Data-driven bias can be mitigated with clear sample size requirements before deciding an AI model is ready for production,” explains Edward Chan, senior product manager at Elementary.

Label bias: Emerges when the worker or AI system that categorizes or describes each element in a training dataset is biased; that bias then spreads to any AI system that learns from the mislabeled dataset. Label bias could result in an emergency stop mechanism failing to recognize a hazardous situation. In quality assurance, label bias is usually mitigated with a manufacturer’s quality control plan, Chan says. The plan describes the standards for passing and failing products in detail and includes images and metrics.

Cultural bias: Cultures vary as to what language and behavior is deemed acceptable or offensive. Cultural bias arises when the training dataset fails to reflect a real-world variation of cultural norms. This type of AI bias may appear in human-robot interactions, such as when a robot interprets operator input or uses gestures to demonstrate gratitude. “While cultural bias is less of an issue in quality assurance, one notable example is that certain cultures, Japan, for example, are more sensitive to cosmetic defects,” Chan says. This bias should be considered when implementing such systems and thinking about purchasing behavior in certain regions.

Confirmation bias: Occurs when an AI solution delivers skewed results that align over time with an operator’s preferred subset of results—to the detriment of accuracy and good decision-making. “This type of bias is caused by the operator’s tendency to seek out and confirm results they already believe, and discard or dismiss results they don’t instinctively agree with,” Chan explains. Confirmation bias can be reduced by ensuring sufficient data in the model and employing labeling and operational practices that are grounded in best practices.
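Chan's suggestion of setting clear sample size requirements before a model goes to production can be illustrated with a short check. The following is a minimal sketch, not Elementary's actual method: the function name `coverage_gaps`, the class names, and the threshold of 100 samples are all hypothetical and chosen only for illustration.

```python
from collections import Counter


def coverage_gaps(labels, required_classes, min_per_class):
    """Return {class: count} for every required class that has fewer
    labeled samples than min_per_class (classes with no samples count as 0)."""
    counts = Counter(labels)
    return {
        cls: counts.get(cls, 0)
        for cls in required_classes
        if counts.get(cls, 0) < min_per_class
    }


# Hypothetical label list for a bottle-inspection training set.
labels = ["ok"] * 500 + ["missing_cap"] * 120 + ["dented"] * 8

gaps = coverage_gaps(
    labels,
    required_classes=["ok", "missing_cap", "dented", "scratched"],
    min_per_class=100,  # assumed threshold, set by the quality plan
)
print(gaps)  # {'dented': 8, 'scratched': 0}
```

An empty result would mean every required class meets the minimum; any entry flags a defect type the model has seen too rarely to be trusted on.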

Data privacy in industrial AI

AI solutions can also go astray in their use of private personal or corporate data. Companies need to make sure their confidential data and intellectual property (IP) are secure. Companies must also exercise care when accessing, using, and storing personal healthcare and consumer data.

The potential for problems flows two ways. Like generative AI systems that vacuum up all accessible content from the global internet, including copyrighted material, an AI model trained by a vendor may be based on training datasets that contain unlicensed IP. Likewise, a company’s proprietary datasets or AI model could end up in another company’s possession through a machine-learning application.

Industrial AI applications need to respect IP and copyright protection when training on someone else’s data. “This is an issue that will be litigated, and we’ll see where it lands,” Martin explains. “Legal precedent would indicate that developers of AI/ML applications need to accept that not all data is freely accessible. When there is ownership, you need to gain the rights and clearance before you use the data.”

While less common in an industrial facility, the personally identifiable information (PII) of individuals also requires protection. An AI-based worker-monitoring system could gather PII, including video images of workers. Similarly, personal data read from a badge barcode could be mislabeled as public when it is in fact private.

Where does cybersecurity fit in?

Hackers could attack AI models using cyberattack vectors and social engineering to exploit AI bias. As with security threats that target connected smart devices, bad actors can remotely control IoT sensors and robots through cloud-based connections. “Vulnerabilities have already been identified in several types of IoT devices. It is not a question of if, but when there will be a major breach of an AI or robotics solution,” Martin says.

A hacker who attacks an AI model could exploit its bias to make a robot behave in unexpected ways. “Like an untrained human resources worker who gives out private information to someone posing as the CEO in a phishing attack, social engineering an autonomous robot could drive unwanted behavior, such as giving out private information or creating a safety hazard,” Bruchanski says.

Best practices to reduce AI bias and improve data security

Martin explains that the best way to overcome bias in artificial intelligence applications is to use human intelligence by way of diverse teams, industry collaboration, and audits. Most important, have a diverse human team write the code and test it.

“If an application development team consists of people of different backgrounds, they are more likely to uncover sources of bias because they have a greater variety of experiences,” Martin says. “Lack of diversity has been a problem in the field of robotics. To address the problem, companies and educators need to start downstream in middle school and high school to promote STEM to students of all backgrounds and thereby increase the talent pool.”

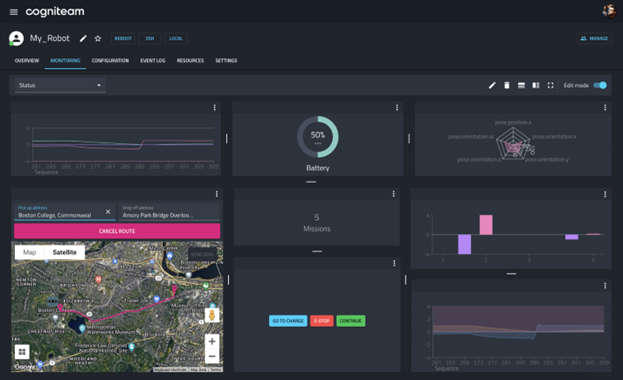

By extension, industry groups can identify and share ways to avoid unintentional bias in industrial AI. Beyond NIST standards, Capability Maturity Model Integration (CMMI) process improvements, Europe’s General Data Protection Regulation (GDPR), California Consumer Privacy Act (CCPA) protections, and Google’s “Don’t Be Evil,” there are very few ethical AI standards and regulations that apply to autonomous industrial AI.

“To fill the gap, A3 is setting up an AI working group to focus on artificial intelligence, and I think that’s a great idea,” Martin says. “Like every other sector, industrial AI needs independent groups that bring together diverse people to achieve industry consensus.”

Software could also help facilitate quality control and auditing by looking for instances of bias and gaps in the handling of data.

Elmaliah recommends putting a person at vital points in the decision loop as one of the best ways to catch bias in an AI application. “Developers can set up notification rules where the robot notifies a person when something is wrong. Sometimes you need a person to say, ‘Yes’ or ‘No,’ instead of letting AI make a crucial decision.”
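One way to picture the notification rules Elmaliah describes is a gate that sends a decision to a person whenever the action is safety-critical or the model is unsure. This is a minimal sketch under stated assumptions: the `Decision` type, the `route_decision` function, and the 0.9 confidence threshold are hypothetical, not part of Cogniteam's platform.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    needs_human: bool
    reason: str


def route_decision(action, confidence, safety_critical, min_confidence=0.9):
    """Decide whether a predicted action may run automatically or must
    wait for a person to say yes or no."""
    if safety_critical:
        # Crucial decisions always go to a person, however confident the model is.
        return Decision(action, True, "safety-critical action")
    if confidence < min_confidence:
        return Decision(action, True, "confidence below threshold")
    return Decision(action, False, "auto-approved")


# Hypothetical robot actions and model confidences.
print(route_decision("open_door", 0.97, safety_critical=False))
print(route_decision("open_door", 0.62, safety_critical=False))
print(route_decision("resume_after_estop", 0.99, safety_critical=True))
```

In a real deployment the `needs_human` flag would trigger whatever alerting channel the site already uses; the threshold is a policy choice rather than a property of the model.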

He adds that it is common to see a degradation in the abilities of a trained AI application over time. “Monitoring enables a person to identify a wrong inference and feed the AI model correct data to improve its training.” In other words, send the AI robot back to school for refresher training.
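The monitoring idea can be sketched as a rolling accuracy check over predictions that a person has confirmed or corrected. The class name `AccuracyMonitor`, the window size, and the accuracy threshold below are assumptions made for illustration; the article does not specify how such monitoring is implemented.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent human-reviewed predictions."""

    def __init__(self, window=100, min_accuracy=0.95):
        self.outcomes = deque(maxlen=window)  # True where the model was right
        self.min_accuracy = min_accuracy

    def record(self, predicted, confirmed):
        """Store whether the model's prediction matched the reviewer's label."""
        self.outcomes.append(predicted == confirmed)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        """Report degradation only once the window is full, to avoid
        raising alerts on a handful of early samples."""
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.min_accuracy


# Hypothetical review log: (model prediction, label confirmed by a person).
monitor = AccuracyMonitor(window=4, min_accuracy=0.75)
for predicted, confirmed in [("ok", "ok"), ("ok", "defect"), ("ok", "ok"), ("ok", "defect")]:
    monitor.record(predicted, confirmed)

print(monitor.accuracy())  # 0.5
print(monitor.degraded())  # True
```

The corrected labels collected this way are also the data that would be fed back to the model for retraining.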

It is helpful to put a person in the loop also when developing human-robot interactions (HRI).

“We put a programmer in the loop to monitor and understand if an AI system is working correctly or not,” Elmaliah says. “This way we can identify bias, find anomalies, and correct them, in a feedback loop.”

Training on diverse data sets, factory acceptance testing, and emphasizing physical security are three more ways to mitigate AI bias, explains Bruchanski. “AI/ML systems need a lot of data, and that data needs to capture all the permutations and edge cases,” he says. “Think about training an AI model like you would train a person. You want the AI model to be in the best position to decide, so you need to give it diverse data from which it can make good decisions.”

Once you think it is ready, watch the machine and see what happens—run it through its paces as part of factory acceptance testing, Bruchanski recommends. “Although AI/ML is new, robots in automated and autonomous industrial manufacturing environments have existed for a long time, and factory acceptance testing is a standard method of validating the behaviors of automated systems—whether a quality inspection system, an arm, a robot, or even a dark factory, also called a ‘lights-out factory,’ which runs alone without human intervention onsite,” he explains. “This same approach works for AI systems.”

At the same time, don’t forget the basics that apply to securing and protecting any type of industrial software: physically secure the facility and check for cyber risks. Physical and cyber security helps keep out malicious actors.

“With industrial automation that isn’t connected to the cloud, you have an air gap, a layer of physical security in the facility,” Bruchanski explains. “That doesn’t eliminate the need for cybersecurity, however, so we use a third-party service that evaluates the security of our AI models.”

Also, keep all software up to date, including AI applications. Software updates are as important as ever to minimize bias and data privacy issues in AI, urges Elmaliah. “Don’t leave a system without a plan and mechanism for updating it with new data from the environment,” he says. “Our AI trained models talk to the operator natively, so we design the robot to learn continuously to eliminate bias.”

Unintentional bias in industrial automation AI solutions can create inefficiencies, safety hazards, and risks to a company’s bottom line. AI ethics may not be what most people think of as “ethics,” but it nonetheless takes deliberate effort to get right. When conscientious developers work to avoid unintentional bias and privacy breaches, AI applications can stay up to date, bias free, and data secure.

Association for Advancing Automation
